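After joining the lab, I was lucky enough to get my hands on the DGX Spark, NVIDIA's AI toy, and our professor even bought two of them to build a DGX Spark cluster.

Since two DGX Sparks recently arrived in the lab and our professor also bought the interconnect cable, let's see how much power we get when they are linked together.

First, take a look at this remarkable little chassis. Inside sits a GB10 chip built on the Grace Blackwell architecture!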

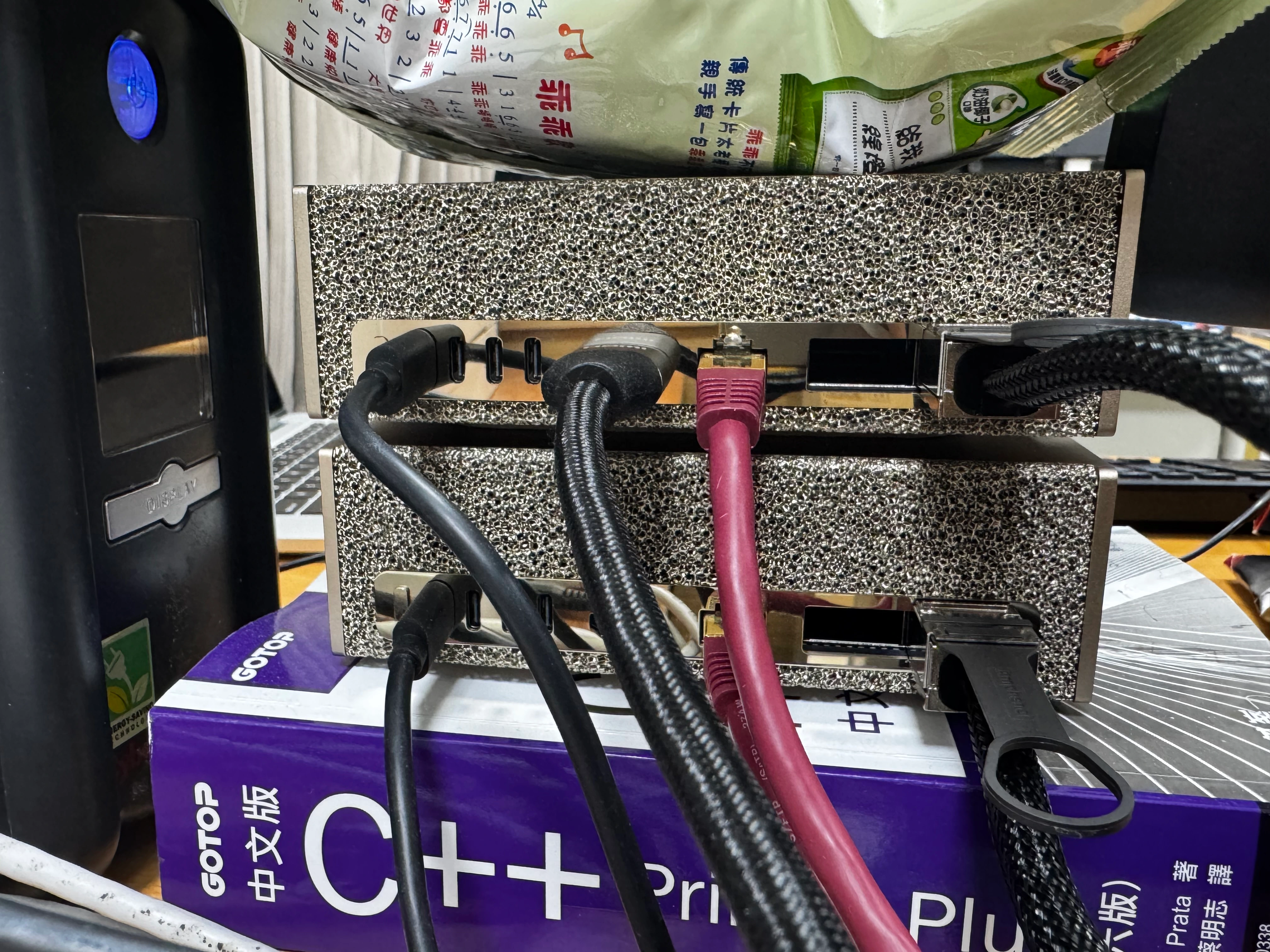

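The rear panel is simple yet versatile: it carries 10GbE plus a ConnectX-7 high-speed NIC. ConnectX-7 as a product line reaches 400 Gb/s, while the usable bandwidth between two Sparks is the 200 Gbps targeted in the NCCL test later in this post.

For architecture details, see the iThome article 採用GB10晶片,輝達與多家系統廠商推出新型態AI工作站.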

Step 0:

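- Install the OS on both machines and make sure both have the same user.

- Prepare one 400G QSFP cable to connect the two machines.

- Download discover-sparks.sh, the script that discovers the InfiniBand network.

- I did not grant my user permission to run docker, so if any docker command in the steps below fails with a permission error, just prefix it with sudo.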

Step 1:

The following follows the steps in NVIDIA - Connect Two Sparks.

- Verify the user

  Make sure node1 and node2 have the same user!

      # Print the current user
      whoami

- Verify that the QSFP link is connected

  An interface is connected only when it shows (Up). Normally one pair of interfaces comes up together.

      # Check QSFP interface availability on both nodes
      nvidia@dgx-spark-1:~$ ibdev2netdev
      roceP2p1s0f0 port 1 ==> enP2p1s0f0np0 (Down)
      roceP2p1s0f1 port 1 ==> enP2p1s0f1np1 (Up)
      rocep1s0f0 port 1 ==> enp1s0f0np0 (Down)
      rocep1s0f1 port 1 ==> enp1s0f1np1 (Up)
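When several ports are listed, it can help to pull out just the connected ones. The following is a minimal sketch, not part of the NVIDIA guide: `up_interfaces` is a hypothetical helper name, and it assumes the output keeps the `... ==> <netdev> (Up)` layout shown in the sample above.

```shell
# up_interfaces: read ibdev2netdev-style lines on stdin and print the
# network interface name of every port reported as (Up).
up_interfaces() {
  awk '$NF == "(Up)" { print $(NF - 1) }'
}

# Demo on the sample output from this post.
# On a real node you would run: ibdev2netdev | up_interfaces
up_interfaces <<'EOF'
roceP2p1s0f0 port 1 ==> enP2p1s0f0np0 (Down)
roceP2p1s0f1 port 1 ==> enP2p1s0f1np1 (Up)
rocep1s0f0 port 1 ==> enp1s0f0np0 (Down)
rocep1s0f1 port 1 ==> enp1s0f1np1 (Up)
EOF
```

For the sample data this prints the two interfaces marked (Up), one from each naming family.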

- Configure the CX7 network interfaces

  I went with option 2, because a static IP stays the same after a reboot. You can pick any IPs you like, but they must not conflict with your existing network, and it is best to keep them on a separate subnet.

  Node1 configuration:

      # Create the netplan configuration file
      sudo tee /etc/netplan/40-cx7.yaml > /dev/null <<EOF
      network:
        version: 2
        ethernets:
          enp1s0f0np0:
            addresses:
              - 192.168.100.10/24
            dhcp4: no
          enp1s0f1np1:
            addresses:
              - 192.168.200.12/24
            dhcp4: no
          enP2p1s0f0np0:
            addresses:
              - 192.168.100.14/24
            dhcp4: no
          enP2p1s0f1np1:
            addresses:
              - 192.168.200.16/24
            dhcp4: no
      EOF

      # Set appropriate permissions
      sudo chmod 600 /etc/netplan/40-cx7.yaml

      # Apply the configuration
      sudo netplan apply

  Node2 configuration:

      # Create the netplan configuration file
      sudo tee /etc/netplan/40-cx7.yaml > /dev/null <<EOF
      network:
        version: 2
        ethernets:
          enp1s0f0np0:
            addresses:
              - 192.168.100.11/24
            dhcp4: no
          enp1s0f1np1:
            addresses:
              - 192.168.200.13/24
            dhcp4: no
          enP2p1s0f0np0:
            addresses:
              - 192.168.100.15/24
            dhcp4: no
          enP2p1s0f1np1:
            addresses:
              - 192.168.200.17/24
            dhcp4: no
      EOF

      # Set appropriate permissions
      sudo chmod 600 /etc/netplan/40-cx7.yaml

      # Apply the configuration
      sudo netplan apply
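Before applying an address plan like this, a quick sanity check on the subnets can catch mistakes. The sketch below is only an illustration: `subnet24` and `same_subnet24` are hypothetical helper names, it assumes /24 prefixes as in the configuration above, and `10.0.0.5` stands in for an address on your existing LAN.

```shell
# subnet24: print the first three octets of an IPv4 address (its /24 network part).
# A trailing prefix length such as /24 is dropped along with the last octet.
subnet24() {
  printf '%s\n' "${1%.*}"
}

# same_subnet24: succeed when two IPv4 addresses share a /24.
same_subnet24() {
  [ "$(subnet24 "$1")" = "$(subnet24 "$2")" ]
}

# The two ends of one link should sit on the same subnet ...
same_subnet24 192.168.100.10 192.168.100.11 && echo "enp1s0f0np0 pair: same subnet"

# ... while a CX-7 address should not land on the existing LAN.
# 10.0.0.5 is a hypothetical LAN address used only for illustration.
same_subnet24 192.168.100.10 10.0.0.5 || echo "no clash with the LAN subnet"
```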

- Set up passwordless SSH authentication

  Pick one node and run discover-sparks.sh, the InfiniBand network discovery script. It finds the InfiniBand interface IPs on its own.

      bash ./discover-sparks

  The expected output looks like the following, with different IP addresses and node names. On the first run, the script prompts for each node's password.

      Found: 169.254.35.62 (dgx-spark-node1.local)
      Found: 169.254.35.63 (dgx-spark-node2.local)

      Setting up bidirectional SSH access (local <-> remote nodes)...
      You may be prompted for your password for each node.

      SSH setup complete! Both local and remote nodes can now SSH to each other without passwords.

- Verify that the nodes can reach each other

      # Test hostname resolution across nodes
      ssh <InfiniBand_IP for Node 1> hostname
      ssh <InfiniBand_IP for Node 2> hostname

  For example, with the IPs found in the passwordless SSH item above:

      ssh 169.254.35.62 hostname
      ssh 169.254.35.63 hostname
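To confirm that the logins really are passwordless, one option is to forbid ssh from prompting at all. This is a rough sketch rather than anything from the NVIDIA guide: `check_nodes` and the `SSH_BIN` override are hypothetical names, while `BatchMode` and `ConnectTimeout` are standard OpenSSH client options.

```shell
# SSH_BIN is a hypothetical override hook so the loop can be dry-run; it defaults to ssh.
SSH_BIN="${SSH_BIN:-ssh}"

# check_nodes: try a non-interactive login to every IP given as an argument.
# BatchMode=yes makes ssh fail instead of prompting, so a password prompt
# counts as "not set up yet". Returns non-zero if any node fails.
check_nodes() {
  failed=0
  for ip in "$@"; do
    if "$SSH_BIN" -o BatchMode=yes -o ConnectTimeout=5 "$ip" hostname >/dev/null 2>&1; then
      echo "$ip: ok"
    else
      echo "$ip: FAILED"
      failed=1
    fi
  done
  return "$failed"
}

# Example with the sample IPs from the discover-sparks output:
#   check_nodes 169.254.35.62 169.254.35.63
```

Running it from each node in turn covers both directions of the bidirectional setup.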

Step 2:

The following follows the steps in NVIDIA - NCCL for Two Sparks.

- Build NCCL with Blackwell support

  Run the following commands on both nodes!

      # Install dependencies and build NCCL
      sudo apt-get update && sudo apt-get install -y libopenmpi-dev
      git clone -b v2.28.9-1 https://github.com/NVIDIA/nccl.git ~/nccl/
      cd ~/nccl/
      make -j src.build NVCC_GENCODE="-gencode=arch=compute_121,code=sm_121"

      # Set environment variables
      export CUDA_HOME="/usr/local/cuda"
      export MPI_HOME="/usr/lib/aarch64-linux-gnu/openmpi"
      export NCCL_HOME="$HOME/nccl/build/"
      export LD_LIBRARY_PATH="$NCCL_HOME/lib:$CUDA_HOME/lib64/:$MPI_HOME/lib:$LD_LIBRARY_PATH"
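The `compute_121` / `sm_121` pair in the build command corresponds to compute capability 12.1 with the dot removed. A small sketch of that mapping follows; `gencode_for_cap` is a hypothetical helper name, not something NCCL provides.

```shell
# gencode_for_cap: turn a compute capability such as "12.1" into the
# NVCC_GENCODE string used by the NCCL build (dot removed: 12.1 -> 121).
gencode_for_cap() {
  cap=$(printf '%s' "$1" | tr -d '.')
  printf '%s\n' "-gencode=arch=compute_${cap},code=sm_${cap}"
}

# Reproduces the value passed to make in the build step.
gencode_for_cap 12.1
```

On a node with the driver installed, the capability could be read with `nvidia-smi --query-gpu=compute_cap --format=csv,noheader` and passed to the helper, assuming your driver version supports that query field.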

- Build the NCCL test suite

      # Clone and build NCCL tests
      git clone https://github.com/NVIDIA/nccl-tests.git ~/nccl-tests/
      cd ~/nccl-tests/
      make MPI=1

- Find the active CX-7 network interface and its IP address

  Run the following commands on both nodes!

  Use an interface that shows "(Up)" in the output. In this example we use enp1s0f1np1.

  Ignore every interface whose name starts with the enP2p<…> prefix and only consider those starting with enp1<…>.

      # Check network port status
      ibdev2netdev

  Sample output:

      roceP2p1s0f0 port 1 ==> enP2p1s0f0np0 (Down)
      roceP2p1s0f1 port 1 ==> enP2p1s0f1np1 (Up)
      rocep1s0f0 port 1 ==> enp1s0f0np0 (Down)
      rocep1s0f1 port 1 ==> enp1s0f1np1 (Up)

  Look up the IP for each interface:

      ip addr show enp1s0f0np0
      ip addr show enp1s0f1np1

  Sample output:

      # In this example, we are using interface enp1s0f1np1.
      nvidia@dgx-spark-1:~$ ip addr show enp1s0f1np1
      4: enp1s0f1np1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
          link/ether 3c:6d:66:cc:b3:b7 brd ff:ff:ff:ff:ff:ff
          inet 169.254.35.62/16 brd 169.254.255.255 scope link noprefixroute enp1s0f1np1
             valid_lft forever preferred_lft forever
          inet6 fe80::3e6d:66ff:fecc:b3b7/64 scope link
             valid_lft forever preferred_lft forever
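If you want the address on its own, for example to paste into the mpirun host list, it can be cut out of the `ip addr show` output. A minimal sketch, with `first_ipv4` as a hypothetical helper name and the sample output from this post as input:

```shell
# first_ipv4: read `ip addr show <iface>` output on stdin and print the
# first IPv4 address without its prefix length.
first_ipv4() {
  awk '$1 == "inet" { split($2, a, "/"); print a[1]; exit }'
}

# Demo on the sample output from this post.
# On a real node you would run: ip addr show enp1s0f1np1 | first_ipv4
first_ipv4 <<'EOF'
4: enp1s0f1np1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
    link/ether 3c:6d:66:cc:b3:b7 brd ff:ff:ff:ff:ff:ff
    inet 169.254.35.62/16 brd 169.254.255.255 scope link noprefixroute enp1s0f1np1
       valid_lft forever preferred_lft forever
    inet6 fe80::3e6d:66ff:fecc:b3b7/64 scope link
       valid_lft forever preferred_lft forever
EOF
```

The `inet6` line is skipped because only lines whose first field is exactly `inet` match.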

- Run the NCCL communication test

  A single QSFP cable is enough to reach full bandwidth. When two QSFP cables are connected, all four interfaces must be assigned IP addresses to get full bandwidth.

      # Set network interface environment variables (use your Up interface from the previous step)
      # UCX_NET_DEVICES, NCCL_SOCKET_IFNAME and OMPI_MCA_btl_tcp_if_include must all be set to the interface name you are using.
      export UCX_NET_DEVICES=enp1s0f1np1
      export NCCL_SOCKET_IFNAME=enp1s0f1np1
      export OMPI_MCA_btl_tcp_if_include=enp1s0f1np1

      # Run the all_gather performance test across both nodes (replace the IP addresses with the ones you found in the previous step)
      mpirun -np 2 -H <Node1_CX7_IP>:1,<Node2_CX7_IP>:1 \
        --mca plm_rsh_agent "ssh -o UserKnownHostsFile=/dev/null -o StrictHostKeyChecking=no" \
        -x LD_LIBRARY_PATH=$LD_LIBRARY_PATH \
        $HOME/nccl-tests/build/all_gather_perf

  You can also try a larger buffer size in the NCCL test to make full use of the 200 Gbps bandwidth.

      # Set network interface environment variables (use your active interface)
      # UCX_NET_DEVICES, NCCL_SOCKET_IFNAME and OMPI_MCA_btl_tcp_if_include must all be set to the interface name you are using.
      export UCX_NET_DEVICES=enp1s0f1np1
      export NCCL_SOCKET_IFNAME=enp1s0f1np1
      export OMPI_MCA_btl_tcp_if_include=enp1s0f1np1

      # Run the all_gather performance test across both nodes
      mpirun -np 2 -H <Node1_CX7_IP>:1,<Node2_CX7_IP>:1 \
        --mca plm_rsh_agent "ssh -o UserKnownHostsFile=/dev/null -o StrictHostKeyChecking=no" \
        -x LD_LIBRARY_PATH=$LD_LIBRARY_PATH \
        $HOME/nccl-tests/build/all_gather_perf -b 16G -e 16G -f 2
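nccl-tests reports bandwidth in GB/s, while the link is quoted in Gbit/s, so a quick conversion makes the two comparable. The helpers below are a sketch with hypothetical names, and the 20 GB/s input is a made-up figure for illustration, not one of my measurements.

```shell
# gbytes_to_gbits: convert GB/s (as printed in the busbw column) to Gbit/s.
gbytes_to_gbits() {
  awk -v v="$1" 'BEGIN { printf "%.1f\n", v * 8 }'
}

# link_utilization: percentage of a link rate (Gbit/s) reached by a GB/s figure.
link_utilization() {
  awk -v v="$1" -v link="$2" 'BEGIN { printf "%.1f\n", v * 8 / link * 100 }'
}

# 20 GB/s is a hypothetical bus bandwidth; 200 is the link rate in Gbit/s.
gbytes_to_gbits 20
link_utilization 20 200
```

With those inputs the helpers print 160.0 and 80.0, that is, 160 Gbit/s or 80% of a 200 Gbps link.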

Supplement:

- Set the CX-7 MTU

  Run the following commands on both nodes!

      # Set MTU to 9000~9216 for all CX-7 interfaces;
      # pick a value in that range that matches the equipment on the other end
      sudo ip link set enp1s0f0np0 mtu 9000
      sudo ip link set enp1s0f1np1 mtu 9000
      sudo ip link set enP2p1s0f0np0 mtu 9000
      sudo ip link set enP2p1s0f1np1 mtu 9000
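A value set with `ip link set` does not survive a reboot, so it is worth checking the MTU again before a benchmark run. The sketch below uses hypothetical helper names (`mtu_of`, `mtu_matches`) and a hand-written sample line in the usual `ip link show` layout.

```shell
# mtu_of: read `ip link show <iface>` output on stdin and print its MTU.
mtu_of() {
  awk '{ for (i = 1; i < NF; i++) if ($i == "mtu") { print $(i + 1); exit } }'
}

# mtu_matches: succeed when the MTU read from stdin equals the expected value.
mtu_matches() {
  [ "$(mtu_of)" = "$1" ]
}

# Hand-written sample line; on a real node you would run:
#   ip link show enp1s0f1np1 | mtu_matches 9000
sample='4: enp1s0f1np1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9000 qdisc mq state UP mode DEFAULT group default qlen 1000'
printf '%s\n' "$sample" | mtu_matches 9000 && echo "MTU is 9000"
```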

- Check the RDMA link

      rdma link

  Sample output:

      link rocep1s0f0/1 state DOWN physical_state DISABLED netdev enp1s0f0np0
      link rocep1s0f1/1 state ACTIVE physical_state LINK_UP netdev enp1s0f1np1
      link roceP2p1s0f0/1 state DOWN physical_state DISABLED netdev enP2p1s0f0np0
      link roceP2p1s0f1/1 state ACTIVE physical_state LINK_UP netdev enP2p1s0f1np1

- RoCE test

      sudo apt install perftest
      show_gids

  Find your interface and its GID index. We only look at the rocep*s*f* devices. Sample output:

      DEV           PORT  INDEX  GID                                      IPv4            VER  DEV
      ---           ----  -----  ---                                      ------------    ---  ---
      rocep1s0f0    1     0      ....:....:....:....:....:....:....:....                  v1   enp1s0f0np0
      rocep1s0f0    1     1      ....:....:....:....:....:....:....:....                  v2   enp1s0f0np0
      rocep1s0f1    1     0      ....:....:....:....:....:....:....:....                  v1   enp1s0f1np1
      rocep1s0f1    1     1      ....:....:....:....:....:....:....:....                  v2   enp1s0f1np1
      rocep1s0f1    1     2      ....:....:....:....:....:....:....:....  192.168.555.12  v1   enp1s0f1np1
      rocep1s0f1    1     3      ....:....:....:....:....:....:....:....  192.168.555.12  v2   enp1s0f1np1
      roceP2p1s0f0  1     0      ....:....:....:....:....:....:....:....                  v1   enP2p1s0f0np0
      roceP2p1s0f0  1     1      ....:....:....:....:....:....:....:....                  v2   enP2p1s0f0np0
      roceP2p1s0f1  1     0      ....:....:....:....:....:....:....:....                  v1   enP2p1s0f1np1
      roceP2p1s0f1  1     1      ....:....:....:....:....:....:....:....                  v2   enP2p1s0f1np1
      roceP2p1s0f1  1     2      ....:....:....:....:....:....:....:....  192.168.555.16  v1   enP2p1s0f1np1
      roceP2p1s0f1  1     3      ....:....:....:....:....:....:....:....  192.168.555.16  v2   enP2p1s0f1np1
      n_gids_found=12

      # ib_send_bw: measure network bandwidth
      # Node1 (Server):
      ib_send_bw -d rocep1s0f1 -x <GID> -R
      # ex: ib_send_bw -d rocep1s0f1 -x 3 -R
      # Node2 (Client):
      ib_send_bw <node1_CX7_ip> -d rocep1s0f1 -x 3 -R

      # ib_send_lat: measure network latency
      # Node1 (Server):
      ib_send_lat -d rocep1s0f1 -x <GID> -R
      # ex: ib_send_lat -d rocep1s0f1 -x 3 -R
      # Node2 (Client):
      ib_send_lat <node1_CX7_ip> -d rocep1s0f1 -x 3 -R
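The `-x 3` in the examples is the INDEX of the RoCE v2 row that carries an IPv4 address. Picking it out by hand is easy to get wrong, so here is a small sketch of the selection; `roce_v2_gid_index` is a hypothetical helper name, and it assumes the whitespace-separated column layout of the sample output, with the addresses masked as in this post.

```shell
# roce_v2_gid_index: read show_gids output on stdin and print the INDEX of
# the first RoCE v2 entry on the given device that carries an IPv4 address.
# Rows with an IPv4 address have 7 fields; rows without one have 6.
roce_v2_gid_index() {
  awk -v dev="$1" '$1 == dev && NF == 7 && $6 == "v2" { print $3; exit }'
}

# Demo on a slice of the sample output.
# On a real node you would run: show_gids | roce_v2_gid_index rocep1s0f1
roce_v2_gid_index rocep1s0f1 <<'EOF'
DEV           PORT  INDEX  GID                                      IPv4            VER  DEV
rocep1s0f1    1     0      ....:....:....:....:....:....:....:....                  v1   enp1s0f1np1
rocep1s0f1    1     1      ....:....:....:....:....:....:....:....                  v2   enp1s0f1np1
rocep1s0f1    1     2      ....:....:....:....:....:....:....:....  192.168.555.12  v1   enp1s0f1np1
rocep1s0f1    1     3      ....:....:....:....:....:....:....:....  192.168.555.12  v2   enp1s0f1np1
EOF
```

For this sample the helper prints 3, matching the index used in the ib_send_bw and ib_send_lat examples.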

Step 3:

The following follows the steps in NVIDIA - vLLM for Inference.

- Download the cluster deployment script

      # Download on both nodes
      wget https://raw.githubusercontent.com/vllm-project/vllm/refs/heads/main/examples/online_serving/run_cluster.sh
      chmod +x run_cluster.sh

- Pull the vLLM docker image from NVIDIA NGC

      docker pull nvcr.io/nvidia/vllm:25.11-py3
      export VLLM_IMAGE=nvcr.io/nvidia/vllm:25.11-py3

- Start the Ray cluster head node

  This node coordinates distributed inference and serves the API endpoint.

  Run this on the head node (Node1) only!

      # On Node 1, start head node

      # Get the IP address of the high-speed interface
      # Use the interface that shows "(Up)" from ibdev2netdev (enp1s0f0np0 or enp1s0f1np1)
      export MN_IF_NAME=enp1s0f1np1
      export VLLM_HOST_IP=$(ip -4 addr show $MN_IF_NAME | grep -oP '(?<=inet\s)\d+(\.\d+){3}')

      echo "Using interface $MN_IF_NAME with IP $VLLM_HOST_IP"

      bash run_cluster.sh $VLLM_IMAGE $VLLM_HOST_IP --head ~/.cache/huggingface \
        -e VLLM_HOST_IP=$VLLM_HOST_IP \
        -e UCX_NET_DEVICES=$MN_IF_NAME \
        -e NCCL_SOCKET_IFNAME=$MN_IF_NAME \
        -e OMPI_MCA_btl_tcp_if_include=$MN_IF_NAME \
        -e GLOO_SOCKET_IFNAME=$MN_IF_NAME \
        -e TP_SOCKET_IFNAME=$MN_IF_NAME \
        -e RAY_memory_monitor_refresh_ms=0 \
        -e MASTER_ADDR=$VLLM_HOST_IP

- Join Node 2 to the Ray cluster as a worker node

  This adds extra GPU resources for tensor parallelism.

  Run this on the worker node (Node2) only!

      # On Node 2, join as worker

      # Set the interface name (same as Node 1)
      export MN_IF_NAME=enp1s0f1np1

      # Get Node 2's own IP address
      export VLLM_HOST_IP=$(ip -4 addr show $MN_IF_NAME | grep -oP '(?<=inet\s)\d+(\.\d+){3}')

      # IMPORTANT: Set HEAD_NODE_IP to Node 1's IP address
      # You must get this value from Node 1 (run: echo $VLLM_HOST_IP on Node 1)
      export HEAD_NODE_IP=<NODE_1_CX7_IP_ADDRESS>

      echo "Worker IP: $VLLM_HOST_IP, connecting to head node at: $HEAD_NODE_IP"

      bash run_cluster.sh $VLLM_IMAGE $HEAD_NODE_IP --worker ~/.cache/huggingface \
        -e VLLM_HOST_IP=$VLLM_HOST_IP \
        -e UCX_NET_DEVICES=$MN_IF_NAME \
        -e NCCL_SOCKET_IFNAME=$MN_IF_NAME \
        -e OMPI_MCA_btl_tcp_if_include=$MN_IF_NAME \
        -e GLOO_SOCKET_IFNAME=$MN_IF_NAME \
        -e TP_SOCKET_IFNAME=$MN_IF_NAME \
        -e RAY_memory_monitor_refresh_ms=0 \
        -e MASTER_ADDR=$HEAD_NODE_IP

- Verify the cluster status

  Confirm that both nodes are recognized by the Ray cluster and are available.

  Run this on the head node (Node1) only!

      # On Node 1 (head node)
      # Find the vLLM container name (it will be node-<random_number>)
      export VLLM_CONTAINER=$(docker ps --format '{{.Names}}' | grep -E '^node-[0-9]+$')
      echo "Found container: $VLLM_CONTAINER"

      docker exec $VLLM_CONTAINER ray status

- Serve the GPT-OSS-120B model with vLLM

  All vLLM parameters can be tuned to your needs; for a parameter reference, see the zhihu article vLLM参数详细说明.

  Run this on the head node (Node1) only!

      # On Node 1, enter container and start server
      export VLLM_CONTAINER=$(docker ps --format '{{.Names}}' | grep -E '^node-[0-9]+$')
      docker exec -it $VLLM_CONTAINER /bin/bash -c '
        vllm serve openai/gpt-oss-120b \
          --host 0.0.0.0 \
          --port 8000 \
          --tensor-parallel-size 2 \
          --max_model_len 2048 \
          --gpu-memory-utilization 0.9'

- Check the service

      # Test from Node 1 or external client
      curl http://<node1_IP>:8000/v1/completions \
        -H "Content-Type: application/json" \
        -d '{
          "model": "openai/gpt-oss-120b",
          "prompt": "Write a haiku about a GPU",
          "max_tokens": 32,
          "temperature": 0.7
        }'
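Loading a 120B model takes a while, so the first request may be refused simply because the server is not up yet. One way to handle that is to poll until the endpoint answers. The `retry` helper below is a hypothetical sketch and not part of vLLM; the polled `/v1/models` path is the model listing route of vLLM's OpenAI-compatible server.

```shell
# retry: run a command up to <attempts> times, sleeping <delay> seconds
# between tries; succeeds as soon as the command does.
retry() {
  attempts=$1
  delay=$2
  shift 2
  n=1
  while :; do
    "$@" && return 0
    [ "$n" -ge "$attempts" ] && return 1
    n=$((n + 1))
    sleep "$delay"
  done
}

# Example: poll for up to 10 minutes (60 tries, 10 s apart), then send the test request.
#   retry 60 10 curl -sf http://<node1_IP>:8000/v1/models
```

The attempt count and delay are arbitrary; adjust them to how long the model takes to load on your setup.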

Deployment complete!!

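Note:

- Forum posts say that two 200G cables can provide an additional 52 Gbit/sec. I am using a single 400G cable, so I have not tried this.

- Official explanation of network aggregation

- GPU Direct RDMA is not supported on DGX Spark

  ref: developer.nvidia - enabling-gpu-direct-rdma-for-dgx-spark-clustering

- So far it looks like mostly users discussing and experimenting among themselves; NVIDIA rarely steps in to clarify or confirm issues.

- Dual-channel networking is still a mystery; nobody seems to have gotten it working.

- The NCCL test and the RoCE test exercise different paths. Keep in mind that they are not the same thing!

- If you run into problems, search the official forum.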

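- Tests, what they measure, and what they are for

  | Test | What it measures | Explanation | Layer | Use case |
  | --- | --- | --- | --- | --- |
  | iperf3 | TCP throughput | TCP / UDP socket throughput | Network stack | General networking |
  | RoCE benchmark | RDMA bandwidth | NIC → NIC memory access, no CPU involvement | NIC transport | HPC network |
  | NCCL test | GPU collective bandwidth | GPU ↔ GPU | Application layer | AI training |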

Takeaways:

Running GPT-OSS-120b on vLLM

- Single-node setup:

  - Single user: Latency: ~28.24 ms/token, TPS: ~35.4 tokens/sec

  - Concurrent users: Latency: ~39.05 ms/token, TPS: ~25.61 tokens/sec

- Two-node setup:

  - Single user: Latency: ~30.23 ms/token, TPS: ~33.08 tokens/sec

  - Concurrent users: Latency: ~29.07 ms/token, TPS: ~34.40 tokens/sec

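- Conclusion:

  With a single user there is little difference between the two setups, but as soon as several users hit the service at once, the cluster shows the advantage of sharded computation and cuts latency substantially.

  - Average generation rate (TPS): up from 25.61 to 34.40 tokens/sec, a gain of about 34.3%.

  - System latency: down by about 25.6%, which makes interaction noticeably smoother.

  - Overall throughput: under heavy load, total system throughput improves by about 34% to 36%, effectively overcoming the single-node bottleneck.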
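The percentages above follow directly from the concurrent-use measurements, and the TPS figures are simply 1000 divided by the per-token latency in milliseconds. A short sketch of the arithmetic, with hypothetical helper names:

```shell
# tps_from_latency: tokens per second implied by a per-token latency in ms.
tps_from_latency() {
  awk -v ms="$1" 'BEGIN { printf "%.2f\n", 1000 / ms }'
}

# pct_change: relative change from the first value to the second, in percent.
pct_change() {
  awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", (b - a) / a * 100 }'
}

tps_from_latency 39.05   # single node, concurrent: 25.61
tps_from_latency 29.07   # two nodes, concurrent:   34.40
pct_change 25.61 34.40   # TPS change:     34.3
pct_change 39.05 29.07   # latency change: -25.6
```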

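Based on these measurements, honestly: if all you want is a large pool of VRAM, you can live with slow inference, and you mostly run 4-bit (FP4) models, then this machine is a good fit for you.

macOS 26.2 recently added RDMA over Thunderbolt 5, so a Mac Studio is worth a try. In principle it should beat this machine on every metric, but its price is also far, far higher.

One catch with the Mac is that clustering still relies on EXO. I have used EXO since it was first open-sourced, and in my experience it was extremely unstable. If you are running a recent version, please let me know whether it is usable now.

Personally, I think that if you have the budget you should just buy a GPU server and not overthink it, unless you want a new toy to play with.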

Reference

NVIDIA - connectx-7-nic-in-dgx-spark

Linkedin - Andrew M._Connecting Two Sparks

jeffgeerling - 1.5 TB of VRAM on Mac Studio - RDMA over Thunderbolt 5